Motion Detection via Communication Signals

Overview

This project was completed for the Signals and Systems lab course. It explored Passive Coherent Location (PCL): using ambient communication signals (here, cellular base station transmissions) as the radar illuminator to detect and characterize human motion without emitting any dedicated radar signal. The approach cross-correlates a reference signal with a surveillance signal over a grid of delays and Doppler shifts to estimate the target’s range difference and velocity.

Results

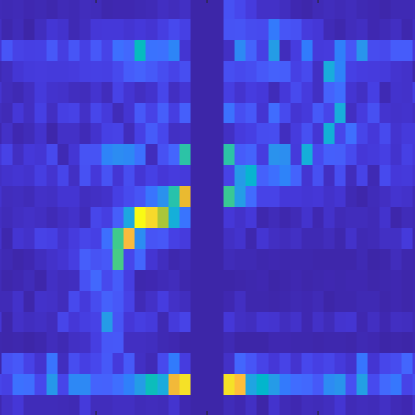

- Successfully recovered Doppler frequency shift vs. time heatmaps from 20 field data files, clearly showing the presence and motion pattern of a human target.

- Estimated target velocity using the ambiguity function peak at each time window, with the geometry of the base station-target-receiver triangle used to resolve the projection angle.

- Extended analysis to micro-Doppler effects as a bonus investigation.

Technical Details

Signal Model:

- Reference signal (direct path from base station): y_ref(t) = α·x(t − τ_r)

- Surveillance signal (reflected off the target): y_sur(t) = β·x(t − τ_s)·e^(j2πf_D t)

- Goal: estimate Δτ = |τ_r − τ_s| (range difference) and f_D (Doppler frequency shift)
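
As a rough illustration of the signal model, the following Python sketch builds a reference and a surveillance sequence from a common transmitted sequence. It is not the project's MATLAB code. The function name `simulate_channels`, the restriction to whole-sample delays, and all numeric values are assumptions made for the example.

```python
import cmath
import math
import random


def simulate_channels(x, delay_ref, delay_sur, f_d, fs, alpha=1.0, beta=0.1):
    """Build y_ref and y_sur from a transmitted sequence x.

    Delays are whole samples (a simplifying assumption). Samples that
    would come from before the start of x are treated as zero.
    """
    n_total = len(x)

    def delayed(k, d):
        # x[k - d], or zero when the index falls outside the record
        return x[k - d] if 0 <= k - d < n_total else 0j

    # Direct path: scaled, delayed copy of x
    y_ref = [alpha * delayed(n, delay_ref) for n in range(n_total)]
    # Reflected path: scaled, delayed copy with a Doppler phase rotation
    y_sur = [
        beta * delayed(n, delay_sur) * cmath.exp(2j * math.pi * f_d * n / fs)
        for n in range(n_total)
    ]
    return y_ref, y_sur


# Hypothetical example values, not taken from the field data.
random.seed(0)
fs = 1000.0
x = [complex(random.gauss(0, 1), random.gauss(0, 1)) for _ in range(200)]
y_ref, y_sur = simulate_channels(x, delay_ref=1, delay_sur=4, f_d=20.0, fs=fs)
```

Synthetic pairs like this are convenient for checking an estimator, because the true delay difference and Doppler shift are known in advance.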

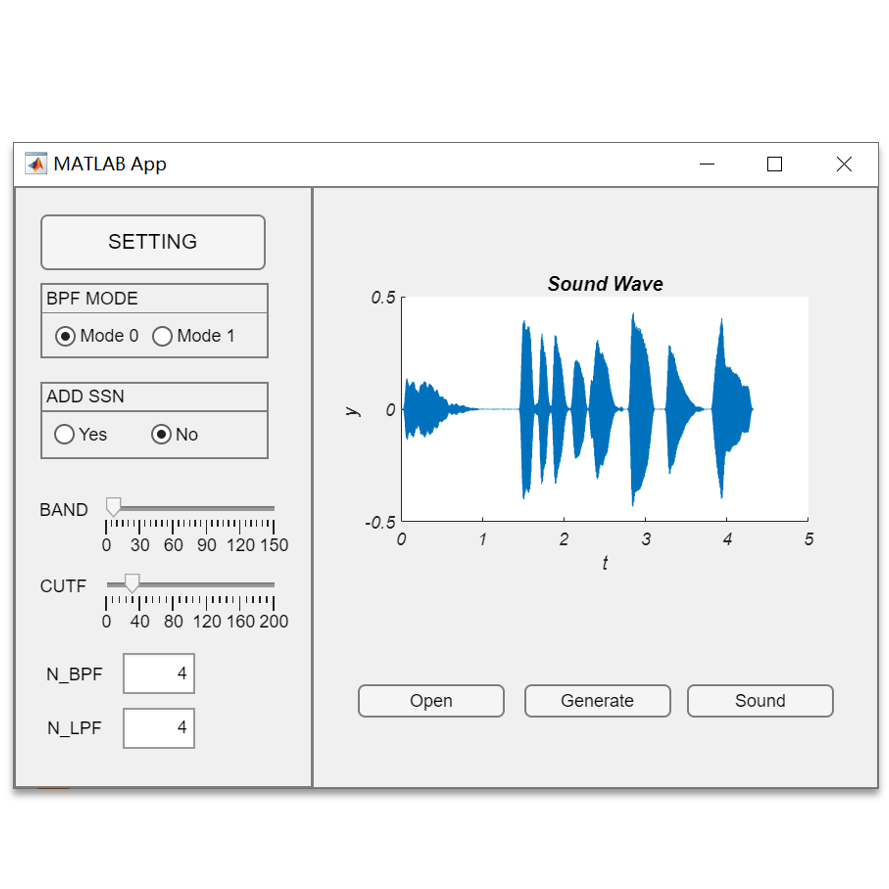

Processing Pipeline (implemented in MATLAB):

- Load data: Read I/Q baseband samples from 20 data files (0.5 s segments, 25 MHz sample rate).

- Digital Down Convert (DDC): Frequency-shifted the 2110–2130 MHz band to baseband to isolate the signal of interest.

- Low-Pass Filtering: Butterworth LPF (order 10, cutoff 8.8 MHz) to reject the adjacent 2130–2135 MHz band and band-limit the signal ahead of sample-rate reduction.

- Ambiguity Function:

$$\text{Cor}(\tau, f_D) = \sum_{n=0}^{N-1} y_{\text{sur}}[nT_s] \cdot y_{\text{ref}}^*[nT_s - \tau] \cdot e^{-j2\pi f_D n T_s}$$

- Swept over 6 range bins (0–5 samples) and 41 Doppler bins (−40 to +40 Hz).

- Peak of the ambiguity function gives the best-fit (Δτ, f_D) estimate.

- Velocity estimation: Computed from v = λ·f_D / (2·cos(β/2)), where β here denotes the bistatic angle (not the amplitude factor β of the signal model) and was determined from the known geometry (base station at 247 m).

- Full heatmap: Stacked results across all 20 files to produce a Doppler frequency vs. time image showing the target’s motion trajectory.
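
The ambiguity-function search and the velocity conversion described in this pipeline can be sketched in a few lines of Python. This is a small illustration under stated assumptions, not the original MATLAB implementation. The names `ambiguity_peak` and `bistatic_velocity`, the 2120 MHz carrier (taken as the centre of the 2110–2130 MHz band), the zero bistatic angle, and the synthetic test signal are all assumptions made for the example.

```python
import cmath
import math
import random


def ambiguity_peak(y_sur, y_ref, fs, delays, dopplers):
    """Return (delay, doppler, magnitude) maximizing |Cor(tau, f_D)|.

    delays are non-negative whole samples, dopplers are in Hz. Reference
    samples from before the start of the record are treated as zero.
    """
    best = (None, None, -1.0)
    for d in delays:
        # The delay-dependent product is shared by every Doppler bin.
        prod = [
            y_sur[n] * y_ref[n - d].conjugate() if n - d >= 0 else 0j
            for n in range(len(y_sur))
        ]
        for f in dopplers:
            acc = sum(
                p * cmath.exp(-2j * math.pi * f * n / fs)
                for n, p in enumerate(prod)
            )
            if abs(acc) > best[2]:
                best = (d, f, abs(acc))
    return best


def bistatic_velocity(f_d, carrier_hz, bistatic_angle_rad):
    """v = lambda * f_D / (2 * cos(beta / 2)), with lambda = c / carrier."""
    wavelength = 299_792_458.0 / carrier_hz
    return wavelength * f_d / (2.0 * math.cos(bistatic_angle_rad / 2.0))


# Synthetic check with hypothetical values: unit-modulus random-phase
# sequence, echo delayed by 3 samples with a 12 Hz Doppler shift.
random.seed(1)
fs, n_total = 200.0, 100
x = [cmath.exp(2j * math.pi * random.random()) for _ in range(n_total)]
y_ref = list(x)
y_sur = [
    0.1 * x[n - 3] * cmath.exp(2j * math.pi * 12.0 * n / fs) if n >= 3 else 0j
    for n in range(n_total)
]

delay, doppler, magnitude = ambiguity_peak(
    y_sur, y_ref, fs, delays=range(6), dopplers=range(-40, 41, 2)
)
velocity = bistatic_velocity(doppler, carrier_hz=2.12e9, bistatic_angle_rad=0.0)
```

The grid mirrors the one in the text (delays of 0–5 samples, Doppler from −40 to +40 Hz in 2 Hz steps). Computing the conjugate product once per delay and reusing it for every Doppler bin removes some of the redundant work of a naive triple loop.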

Challenges

- Cross-correlation computation: Naïve triple-loop implementation in MATLAB was extremely slow; required vectorization and careful indexing of delayed reference samples.

- Frequency ambiguity: Multiple signal components in the raw spectrum made DDC center frequency selection critical; verified by inspecting spectrograms before and after processing.

- Phase coherence: Small timing offsets between reference and surveillance channels had to be absorbed by the ambiguity function grid resolution.
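
The centre-frequency check mentioned under frequency ambiguity can be illustrated with a minimal Python sketch: mix the samples by the candidate frequency and confirm that the component of interest lands at 0 Hz. The helper names `mix_to_baseband` and `tone_magnitude` and the two test tones are hypothetical. A single-bin DFT stands in here for the spectrogram inspection used in the project.

```python
import cmath
import math


def mix_to_baseband(samples, f_shift, fs):
    """Multiply by exp(-j*2*pi*f_shift*n/fs) to move f_shift down to 0 Hz."""
    return [
        s * cmath.exp(-2j * math.pi * f_shift * n / fs)
        for n, s in enumerate(samples)
    ]


def tone_magnitude(samples, f, fs):
    """Magnitude of the normalized single-bin DFT at frequency f."""
    acc = sum(
        s * cmath.exp(-2j * math.pi * f * n / fs) for n, s in enumerate(samples)
    )
    return abs(acc) / len(samples)


# Hypothetical test signal: wanted tone at +3 MHz (amplitude 1.0) plus an
# unwanted tone at -7 MHz (amplitude 0.5), sampled at 25 MHz.
fs = 25e6
raw = [
    cmath.exp(2j * math.pi * 3e6 * n / fs)
    + 0.5 * cmath.exp(2j * math.pi * -7e6 * n / fs)
    for n in range(1000)
]

mixed = mix_to_baseband(raw, f_shift=3e6, fs=fs)
at_dc = tone_magnitude(mixed, 0.0, fs)          # wanted tone, now at 0 Hz
at_offset = tone_magnitude(mixed, -10e6, fs)    # unwanted tone, now at -10 MHz
```

After mixing, the wanted component sits at 0 Hz where a low-pass filter keeps it, while the unwanted component has moved further from baseband.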

Reflection and Insights

This project provided a concrete application of core signals-and-systems concepts — Fourier analysis, filtering, convolution/correlation, and Doppler physics — in a real-world sensing context. The ambiguity function framework elegantly unifies range and velocity estimation into a single 2D search problem, and the MATLAB implementation made the connection between the mathematical formulation and actual computational steps very direct.

The micro-Doppler extension raised interesting questions about how fine-grained body motion (arm swing, breathing) modulates the main Doppler return — relevant to applications like medical monitoring and security sensing.

Team and Role

- Team: Two-person team.

- My Role: Led signal processing pipeline implementation (DDC, filtering, ambiguity function); collaborated on heatmap generation and analysis.