Comparative Analysis of Clustering and Dimensionality Reduction Techniques

Overview

This project, completed for the Statistical Learning for Data Science course, presents a comprehensive comparison of clustering and dimensionality reduction methods: K-means, Soft K-means, PCA, and Linear AutoEncoder. Experiments were conducted on both tabular data (wheat seed dataset) and image data to evaluate each algorithm’s strengths and failure modes across different data types.

Results

Clustering on the Wheat Seed Dataset (210 samples, 7 features, 3 true classes):

| Algorithm | Initialization | Split & Merge | Accuracy Score |

|---|---|---|---|

| K-means (k=3) | Optimized | No | 0.7166 |

| Soft K-means (k=3) | Optimized | Yes | 0.6717 |

| K-means (k=10) | Optimized | No | 0.3750 |

| Soft K-means (k=10) | Random | Yes | 0.6085 |

Key finding: when k matches the true number of classes (k=3), hard K-means outperforms Soft K-means. When k is over-specified (k=10), Soft K-means’s flexible assignments significantly outperform hard K-means.

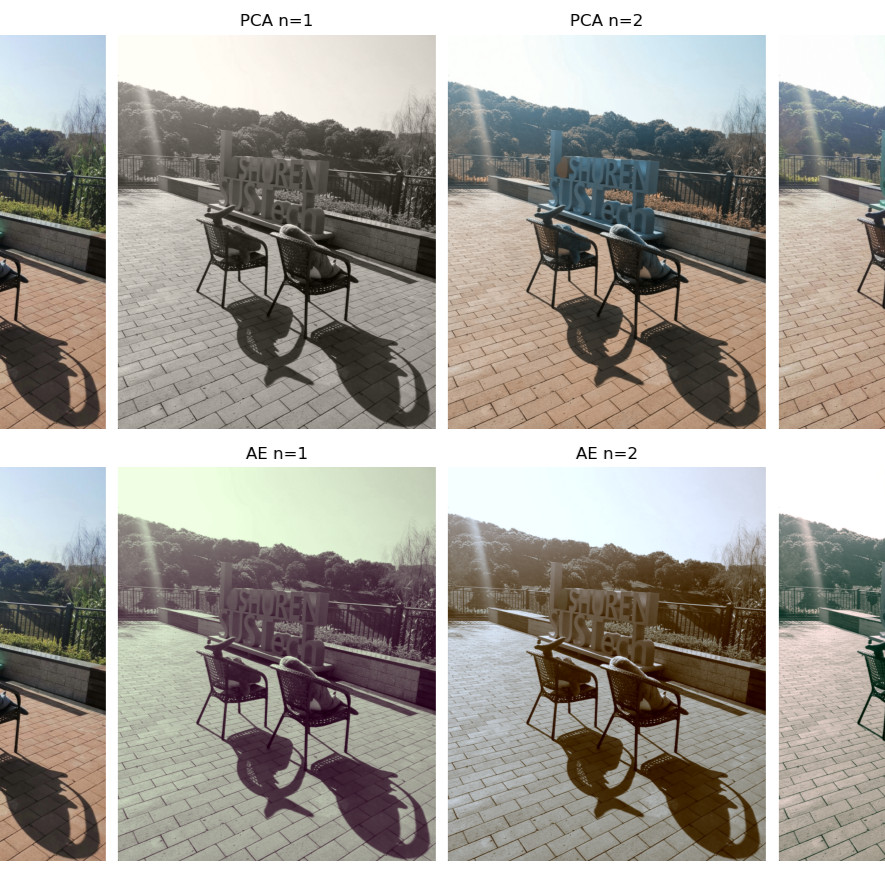

Dimensionality Reduction on Image Data:

- PCA and Linear AutoEncoder produce visually comparable reconstructions for n=1,2,3 principal components.

- When n=3, AutoEncoder loss converges most rapidly, capturing the highest-level structure.

- Subsequent Soft K-means color clustering (k=1,2,3) produced meaningful image compression at all tested dimensions.

Technical Details

- K-means Enhancements:

- Optimized centroid initialization: Selected initial centroids by maximum pairwise distance rather than random sampling, consistently avoiding degenerate clustering.

- Non-local split and merge: Split the largest cluster and merged the two closest; allowed the algorithm to escape local minima, though improvement depended on random factors.

- Soft K-means:

- Responsibility (r_k^{(n)} = \frac{\exp(-\beta d(m_k, x^{(n)}))}{\sum_j \exp(-\beta d(m_j, x^{(n)}))}).

- Explored the effect of β: high β approaches hard K-means; low β causes cluster centers to collapse toward each other.

- PCA:

- Covariance matrix eigendecomposition; top-M eigenvectors used as projection basis.

- Image reconstructed by projecting to M-dimensional subspace and back.

- Linear AutoEncoder:

- Encoder

z = Wx, decoderx̂ = Vz; trained by minimizing MSE reconstruction loss. - With linear activations, mathematically equivalent to PCA; trained via gradient descent.

- Encoder

- Implementation: All algorithms implemented from scratch in Python without ML frameworks for clustering; PyTorch used for the AutoEncoder.

Challenges

- K-means sensitivity to initialization: Random initialization frequently led to incorrect cluster assignments on the wheat dataset. The optimized initialization method (furthest-distance selection) reliably improved results.

- β tuning in Soft K-means: Too low β caused cluster centers to merge; too high β reproduced K-means behavior exactly, removing the benefit of soft assignment.

- AutoEncoder convergence: Loss curves for n=1 were much flatter than for n=3, reflecting that lower-dimensional projections have fewer degrees of freedom to minimize.

Reflection and Insights

The contrast between K-means and Soft K-means under different k values illustrates a fundamental trade-off: hard, definitive assignments are powerful when the number of clusters is known, but soft probabilistic assignments provide robustness when cluster assumptions are violated. The equivalence between a Linear AutoEncoder and PCA — proven analytically and confirmed empirically — demonstrated that gradient-based learning can recover classical statistical results, offering a framework for extending dimensionality reduction to nonlinear settings.

Team and Role

- Solo project: All algorithms implemented, experiments designed, and analysis conducted independently.

Comparative Analysis of Clustering and Dimensionality Reduction Techniques

https://liferli.com/2023/12/05/projects/clustering-analysis/